Summary

Roland Dubois and I are continuing our exploration of WebXR accessibility. We’ve made an interface that is keyboard accessible, screen readable, and voice controllable (see “Does WAI-ARIA even work with WebXR?”), and we considered color contrast ratios and readability in XR (see “Can You Identify Which Color Combination has the Greatest Contrast?”). Now we are focusing on the presentation of text alternatives for 3D objects in XR for people who are blind or low-vision.

In an accessible world, objects presented in a digital environment must have a text description as an alternative for people who cannot adequately perceive their visual information. This is a fundamental building block of accessibility on the web. We believe WCAG 1.1.1 Non-text Content already applies to 3D objects in WebXR. A large majority of web frameworks and web-based SDKs have text alternatives built into the core of their code stack. Unfortunately, frameworks for creating immersive content do not automatically supply text alternatives for the 3D objects used in their rendered XR applications. Every XR developer who implements an accessible text alternative in their project has to start from scratch, since there is no built-in standard like the one in the 2D web frameworks mentioned above.

Here are some WebXR authoring platforms that need to provide a text alternative authoring solution:

Prototype Goal

Our intention is to determine if sound notifications triggered by a hand-controller can be used to help people locate the position of a 3D object in relation to their body’s position. We enabled the text alternative to be read through synthesized speech by pressing the controller’s trigger.

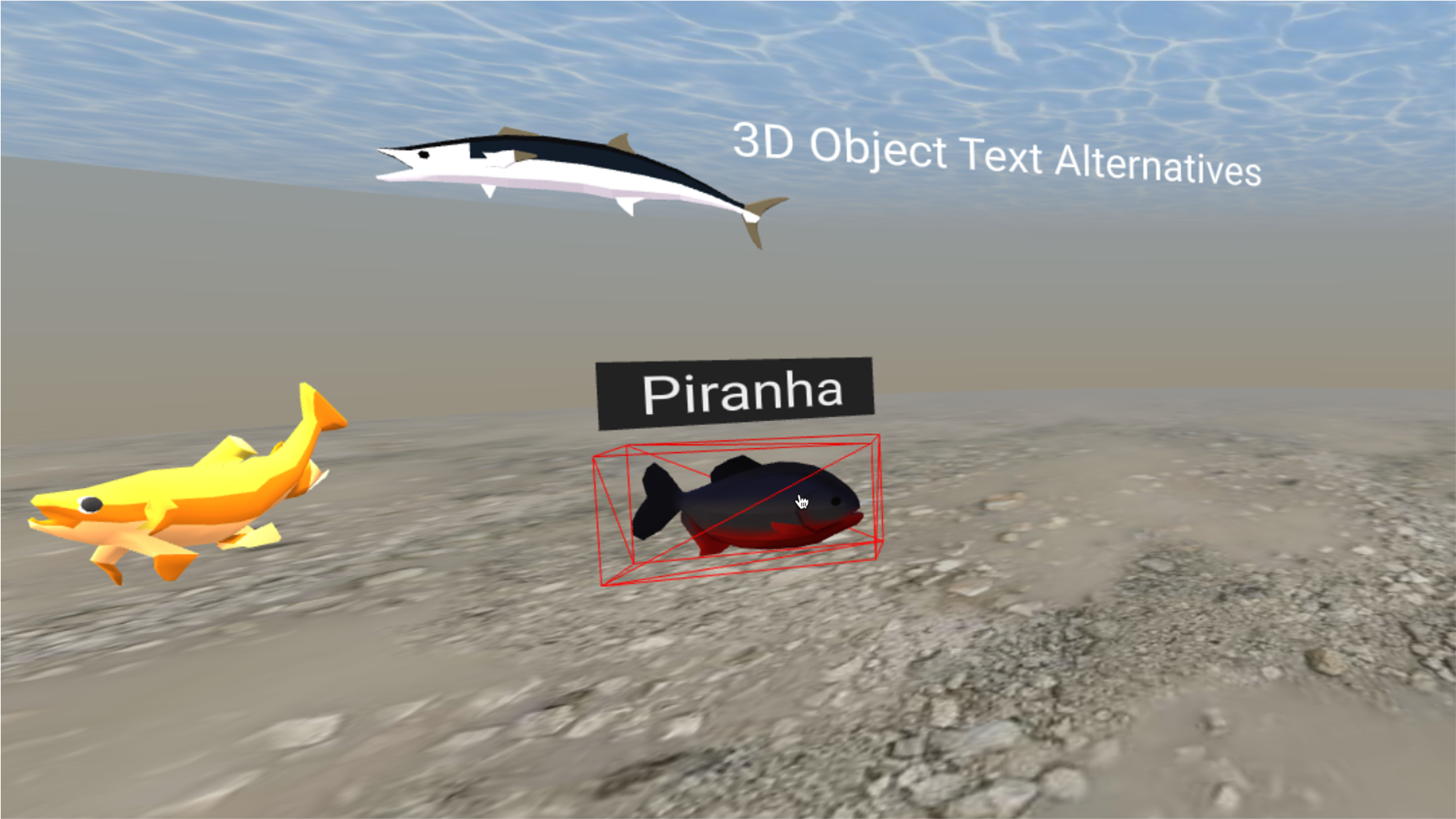

A randomly loaded sequence of five fish in a virtual aquarium experience labeled “WebXR – Text Alternatives for Fish” is the user’s playground. We wanted to set up a task for the user such as “Find the fish that is farthest to the left in your field of vision” or “Find the fish that is swimming closest to the surface in the environment”. These tasks require processing of spatial information presented within the virtual environment. We want to analyze in this experiment how sound notifications can be used with a hand controller to determine when a virtual object is in or out of the user’s focus.

Our current ideas are to train using the following UI Gestures:

Left to Right Horizontal Wipe

Left to Right Vertical Wipe

Technical Details

We considered making these objects directly accessible using ARIA (Accessible Rich Internet Applications), but based on our previous articles we understand that this will not be possible. We then decided to make our application self-voicing. We tried to use the Web Speech API, but it does not work in the Oculus Browser. In the end, we relied on technology from ResponsiveVoice.org to add speech synthesis.
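The fallback described above could be sketched roughly as follows. This is not the code from our project: the function name `speakTextAlternative` and its return values are made up for illustration, and the sketch assumes that a browser without usable support simply does not expose `speechSynthesis`. If a browser exposes the API but stays silent, this kind of feature detection would not be enough, and calling ResponsiveVoice directly would be the safer choice.

```javascript
// Pick a speech backend at runtime. `env` defaults to the global object so
// the function can also be exercised outside a browser with a stub.
function speakTextAlternative(text, env = globalThis) {
  // Preferred path: the standard Web Speech API, where the browser exposes it.
  if (typeof env.speechSynthesis !== 'undefined' &&
      typeof env.SpeechSynthesisUtterance === 'function') {
    env.speechSynthesis.speak(new env.SpeechSynthesisUtterance(text));
    return 'web-speech';
  }
  // Fallback: the ResponsiveVoice script, which provides a global
  // `responsiveVoice` object once it has been loaded on the page.
  if (env.responsiveVoice && typeof env.responsiveVoice.speak === 'function') {
    env.responsiveVoice.speak(text);
    return 'responsivevoice';
  }
  // No speech backend is available.
  return 'none';
}
```

Returning the name of the backend that was used makes it easy to log which path a given headset browser actually takes.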

Hello @OculusGaming and @oculus what is the best way to log a public bug against your software? Right now experiencing #a11y issues with Browser

— Thomas Logan / Techトーマス (@TechThomas) February 8, 2021

Sourcing the Assets

We used the following assets from the Google Poly Store. The Google Poly Store is going away on June 30, 2021. 🙁

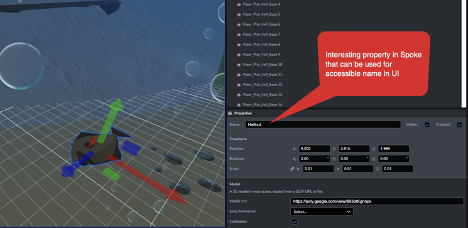

Here is how to add assets from Google Poly to an A-Frame project:

- Download .glb file from Poly.

- Size the object to a depth of 1 and get the height and width values that allow for that sizing.

- Rotate the fish so that they are all facing the same direction.

- Using the glow plugin, size the focus rectangle for the element so that it displays properly.

- Make sure the text faces the label.
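The sizing step in the list above comes down to a small calculation, sketched here. The helper name `scaleToUnitDepth` and the example dimensions are hypothetical, and how the model's original size is measured is left out. The resulting factor could then be applied through A-Frame's `scale` attribute.

```javascript
// Given a model's bounding-box size (in the model's own units), return the
// uniform scale factor that makes its depth exactly 1, together with the
// width and height that result from applying that factor.
function scaleToUnitDepth(size) {
  if (!(size.depth > 0)) {
    throw new RangeError('depth must be a positive number');
  }
  const factor = 1 / size.depth;
  return {
    factor,
    width: size.width * factor,
    height: size.height * factor,
    depth: 1,
  };
}

// Hypothetical fish model measuring 4 x 2 x 0.5 units.
const scaled = scaleToUnitDepth({ width: 4, height: 2, depth: 0.5 });
console.log(scaled); // { factor: 2, width: 8, height: 4, depth: 1 }
```

The width and height returned here are the values to use when sizing the focus rectangle around the scaled model.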

Considering Text Labels as Tooltips

Visual tooltips as text labels for objects are important to ensure that people who are Deaf or Hard of Hearing can identify the objects in the virtual world. We decided that we wanted to have visual labels when you hover over the element in our UI to assist people who are low-vision.

Observations

Much like on an iPhone, we believe a single click on the hand controller should read out the text alternative for a 3D object, just as a single tap on the mobile screen reads the text alternative for a focused object. We believe the design patterns should carry over the same idea of a double tap being used to take an action on a 3D object when a screen reader is running.
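One way to tell a single press from a double press is to compare timestamps, as in the sketch below. This is an illustration rather than something our prototype implements: the name `createPressClassifier`, the callbacks, and the 300 ms window are all assumptions.

```javascript
// Classify trigger presses: a lone press reads the text alternative, and a
// second press within `windowMs` of the first activates the object.
function createPressClassifier(onRead, onActivate, windowMs = 300) {
  let lastPress = -Infinity;
  return function handlePress(label, now = Date.now()) {
    if (now - lastPress <= windowMs) {
      lastPress = -Infinity; // consume the pair so a third press starts fresh
      onActivate(label);
    } else {
      lastPress = now;
      onRead(label);
    }
  };
}

// Usage: the first press reads the label, a quick second press activates.
const press = createPressClassifier(
  (label) => console.log('read: ' + label),
  (label) => console.log('activate: ' + label)
);
press('Clownfish', 1000); // read: Clownfish
press('Clownfish', 1200); // activate: Clownfish
```

Reading on the first press right away, instead of waiting to see whether a second press follows, keeps the spoken feedback immediate.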

Conclusion

The current experience uses visual tooltips as well as sound notifications for object exploration inside a virtual world. By playing a sound notification when the pointer’s raycaster starts intersecting an object, we signal the presence of an actionable object. Once the user presses the trigger, the object’s text alternative, visualized in a tooltip, is announced through synthesized speech. We also believe that a different sound notification is necessary when the object leaves the focus of the pointer’s raycaster. By having distinct sound profiles for the raycaster entering and exiting the object, we enable an audio-only interface. This opens the exploration of spatial context to a non-visual audience.
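The enter, exit, and trigger behavior can be summarized as a small piece of state, sketched below independently of any framework. The name `createFocusTracker` and its callbacks are hypothetical and this is not the code from our prototype. In A-Frame, the `raycaster-intersected` and `raycaster-intersected-cleared` events on the intersected entity and the `triggerdown` event on the controller entity would be natural places to call these methods.

```javascript
// Track which object the pointer ray is on, play distinct cues when the ray
// enters or leaves an object, and speak the label on a trigger press.
function createFocusTracker({ playEnter, playExit, speak }) {
  let focusedLabel = null;
  return {
    // Call when the ray starts intersecting an object.
    enter(label) {
      if (focusedLabel !== null) playExit(); // moved straight from one object to another
      focusedLabel = label;
      playEnter();
    },
    // Call when the ray stops intersecting the focused object.
    exit() {
      if (focusedLabel === null) return; // nothing was focused, so stay silent
      focusedLabel = null;
      playExit();
    },
    // Call when the controller trigger is pressed.
    trigger() {
      if (focusedLabel !== null) speak(focusedLabel);
    },
  };
}
```

Keeping the tracker free of rendering code means the same logic can be driven by real controller events or by a simple script when trying out different sound profiles.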