Summary

Close your eyes and visualize a loved one. Can you see the person’s facial features? Can you visualize them with eyes open, closed, or both? Someone who experiences extremely vivid imagery may have a condition called hyperphantasia.

For those like Joe Devon who cannot create a mental image, the condition is called aphantasia. Many people who have aphantasia don’t realize it. Joe would like to be able to visualize his parents. Is the inability to create mental images a disability?

Do you talk to yourself in your mind? This inner voice is called an inner monologue, and some people don’t have one. Joe wonders how people without an inner monologue think and how it affects their lives. Is the lack of an inner monologue a disability?

The concept of disability is narrowly defined. It doesn’t account for differences in our inner worlds, which we may not even know we have or lack.

The Five Senses, or Is It?

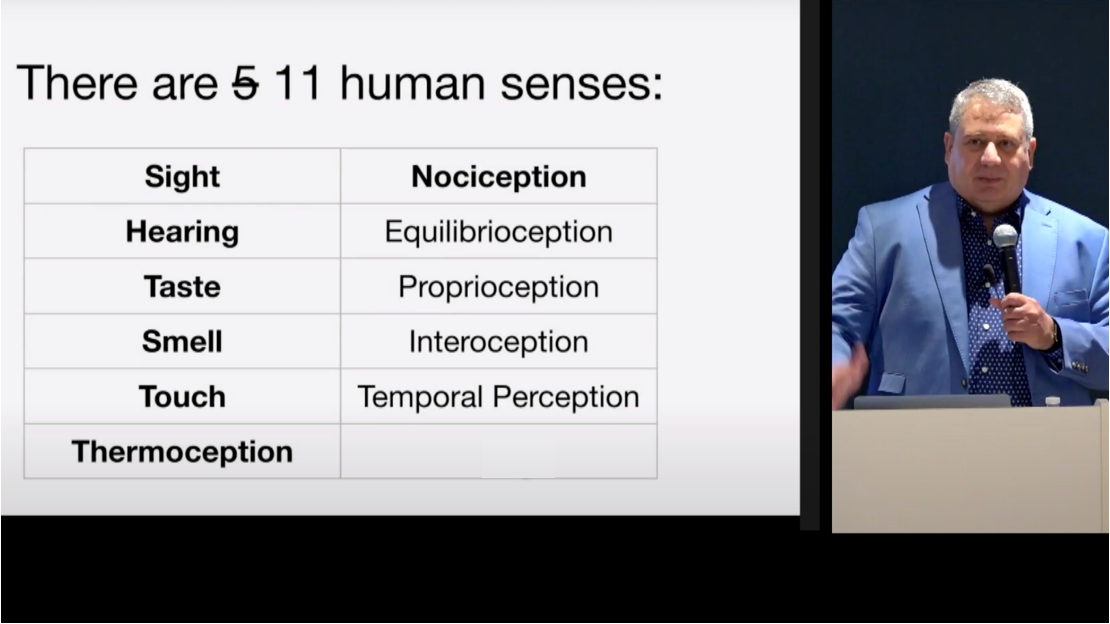

Joe says that artificial intelligence (AI) made him rethink the meaning of disability. Most of us grew up learning about the five senses: smell, taste, sight, hearing, and touch. But by Joe’s count, there are 11 senses. Nociception is the sense of pain. Thermoception is the ability to sense heat or temperature.

Equilibrioception is the sense of balance and spatial orientation. Proprioception is the sense of self-movement and body position. Interoception is the ability to sense what’s going on inside your own body, such as feeling hungry, thirsty, or the need to go to the bathroom. Temporal perception is the sense of time passing.

There are also subsenses, such as color perception. Most humans see color through three types of color receptors (cones), tuned roughly to red, green, and blue (RGB). The genes for the red and green receptors sit on the X chromosome, and the Y chromosome carries none. Men have only one X chromosome, so a faulty red or green gene has no backup.

This is why men are far more likely to be colorblind than women. A very small percentage of women have a fourth receptor, and RGB is not enough for them: they can see an estimated 100 million colors, while people with three receptors see roughly 1 million.

Then there’s memory. About 200 people in the world have hyperthymesia, which means they can recall almost every day of their lives in detail. Compared with those 200, everyone else is memory impaired. But that’s not considered a disability.

AI and Accessibility Are Related to Each Other

Artificial intelligence tries to take sensory input, understand it like a human, and translate it. Aside from this, what is artificial intelligence trying to do?

To get good at researching and working with AI, people need to work with people with disabilities to get the full picture. Doing so also leads to better assistive technology. AI and assistive technology feed into each other.

Most of the good firms building AI for assistive technology involve people with disabilities while developing their products. OpenAI, for example, asked Be My Eyes to be its launch partner for GPT-4.

OpenAI was working with multimodal technology, which means accepting different kinds of input, such as sound, pictures, and text. It tested the technology with people with disabilities and launched with Be My Eyes.

One aspect of this is automatic speech recognition (ASR), which takes spoken speech as its sensory input. Early systems simply mapped sounds to words. But as anyone who has used automatic captions knows, they make a lot of mistakes, for example when the speaker mumbles or mispronounces a word.

As a result, the focus shifted to natural language understanding (NLU). AI will try to understand what the inputs from images and videos mean and use that to do a better job with translation. As this improves, it will be great for assistive technology. For example, AI can take audio, create a transcript, and translate it into multiple languages.

Another challenge is that AI works best for people with standard speech. Speech-to-text accuracy tends to be higher for native English speakers without a strong accent. A person with an Australian accent will see more errors than someone with a standard American accent, even though both are native English speakers.

For AI and accessibility to reinforce each other, AI development must involve people with disabilities. Many people with disabilities don’t have the standard speech that the majority has. Google has found that 250 million people have speech disabilities or non-standard speech.

Google created Project Relate to collect voice samples from a diverse range of people with non-standard speech. Involving disabled people helped the company improve the technology’s ability to understand speech and map it to words.

AI Will Personalize Experiences

AI will generate reality in real time and in the right format for each person. For a blind person, AI will transform inputs into audio. For a deaf person, it will turn sensory input into visuals. And for a deafblind person, input will turn into haptics.

Where is technology going? In the past, the first portable phones were called brick phones. They were heavy and solid. While it was cool, it needed to get smaller. The smartphone is smaller and many people view it as the perfect size and weight. But it’s not. Eventually, everyone will look back at today’s smartphone in the same way they reflect on the brick phone.

Another example is spatial computing. It’s amazing, but today’s headsets are bulky and can be tiring to wear. So what will the new technology stack look like? Brain-computer interfaces (BCIs) will be the new input devices.

Andreas Forsland, CEO of Cognixion, founded the company to help people like his mom, who had locked-in syndrome, communicate their wants and needs. The solution is a headset that connects to the back of a person’s head. This kind of headset is going to be a new input device that lets a person’s brain give commands.

If another company was working on this type of product and didn’t involve people with disabilities, would they succeed? No.

Using Senses to Augment Other Senses

Can you imagine tasting vision? The BrainPort device has a camera that captures video and translates it into electrical signals on a mouthpiece placed on the tongue. Because the tongue contains a lot of nerves, the brain can learn to see the video through it. It sounds like science fiction, but it works.

Think about how ordinary sight works. Your eyes take in color and light, and that information reaches your brain as electrical signals. The brain itself sits in complete darkness; colors reach it only as electrical signals.

By the same token, can you feel sound? Check out the TED Talk by David Eagleman in which he presents a haptic vest. Wired to sound, the vest lets a person hear without ears through “sensory substitution.” Eagleman connected the vest to a smartphone’s audio, so every sound the smartphone picked up created touches on the vest.

Eagleman gave the deaf tester no instructions. After the tester wore the vest for one hour a day over four days, he went to a whiteboard. Someone behind him said a word, and he wrote it on the whiteboard. He was hearing the words through the touches on the vest.
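To make sensory substitution a little more concrete, here is a minimal Python sketch of how one short frame of audio might be turned into vibration strengths for a row of motors. It assumes, as a simplification, that the sound is split into equal-width frequency bands and that each band drives one motor. The function name `band_intensities` and the eight-motor layout are hypothetical and are not taken from Eagleman’s vest or Neosensory’s products.

```python
import math


def band_intensities(samples, num_motors=8):
    """Map one frame of audio samples to per-motor vibration strengths (0.0 to 1.0).

    Hypothetical sketch: the spectrum is split into num_motors equal-width
    frequency bands, and each band's energy sets one motor's strength.
    Bins left over when the spectrum doesn't divide evenly are ignored.
    """
    n = len(samples)
    half = n // 2  # only frequencies up to the Nyquist limit are meaningful
    # Magnitude of each frequency bin, using a naive DFT for readability
    mags = []
    for k in range(half):
        re = sum(s * math.cos(2 * math.pi * k * t / n) for t, s in enumerate(samples))
        im = -sum(s * math.sin(2 * math.pi * k * t / n) for t, s in enumerate(samples))
        mags.append(math.hypot(re, im))
    # Group neighboring bins into one band per motor (low pitches on motor 0)
    band_size = max(1, half // num_motors)
    energies = [sum(mags[i * band_size:(i + 1) * band_size]) for i in range(num_motors)]
    peak = max(energies)
    if peak == 0:
        return [0.0] * num_motors  # silence: every motor stays still
    # Scale so the strongest band drives its motor at full strength
    return [e / peak for e in energies]


# A pure tone lands in a single band, so only one motor buzzes.
tone = [math.sin(2 * math.pi * 10 * t / 64) for t in range(64)]
print([round(v, 3) for v in band_intensities(tone)])
```

Here a 64-sample tone falls into the third band, so motor 2 vibrates at full strength and the others stay near zero. A real wearable would also have to deal with timing, loudness, and background noise.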

Applying the same concept, Eagleman put the haptics into a Neosensory wristband that helps people who have tinnitus. Tinnitus often comes with hearing loss, and the brain tries to fill in the blanks. The wristband turns sounds into touches, which helps the brain understand what’s going on and can reduce the tinnitus. This kind of sensory substitution is what will power new output devices.

In a TED Talk, Imran Chaudhri, a former Apple designer, envisions a future where you can take AI everywhere, with the user interface projected onto your hand. Wearables are where the future is headed. Computers will be invisible, personalized, and accessible, with haptics to supplement and augment the senses. The winners will have accessibility in their secret sauce.

However, the key is not to assume someone else will build these. We will all build them together. Accessibility will mean more than disability; it will mean augmenting everyone’s abilities. Accessibility is for everyone.

Video Highlights


- Expanding on the five senses

- Artificial intelligence and accessibility are synergistic

- Where is new technology going?

- Cross-sensory perception

- Q&A with Joe


Bio

Joe Devon is Head of Accessibility and AI Futurist at Formula.Monks. He is a technology entrepreneur and web accessibility advocate. He co-founded Global Accessibility Awareness Day (GAAD) and serves as the Chair of the GAAD Foundation, focusing on promoting digital accessibility and inclusive design. Joe also explores AI’s potential to revolutionize digital accessibility.

“Most humans have three color receptors. Women have two because they have two X chromosomes.”

This sentence seems wrong in so many ways!

First of all the sentence implies that “most humans” are biologically male ! Which in absolute terms is not true.

Secondly, both women and men have three colour receptors, regardless of their sex chromosomes. The number of colour receptors is determined by genetics and is not directly related to the number of X chromosomes a person has.

Helena, thank you for clarifying. The text comes from the accompanying video. It was a lot of information. We’ve corrected the sentence to match what the video said.

This is such a fascinating vision for the future of AI and wearables! The idea of projecting interfaces onto our hands and seamlessly integrating technology into our senses is mind-blowing. I love the emphasis on accessibility—not just for disabilities but for enhancing everyone’s abilities. The future of computing is looking incredibly intuitive and inclusive!

Thanks for the great comment!