I finally got a sign it was time to upgrade from my iPhone 7 Plus. Of course, I like to use the latest technologies. But I also like to stretch my dollars. So I keep cars (mine is a 2008) and devices for as long as possible.

What was the sign? Two words: Live Captions (beta). The catch? Live Captions would only be available for iPhone 11 and later. No way was I going to miss out on Live Captions.

Here are the models that support Live Captions:

- iPhone 11 and later

- iPad mini: 5th generation and later

- iPad: 8th generation and later

- iPad Air: 3rd generation and later

- iPad Pro 11-inch: all generations

- iPad Pro 12.9-inch: 3rd generation and later

At the time of writing, Live Captions are only available in the U.S. and Canada.

What are Live Captions? They’re automatic captions for speech, whether it comes from the world around you (picked up by your iPhone’s microphone) or from audio playing in apps.

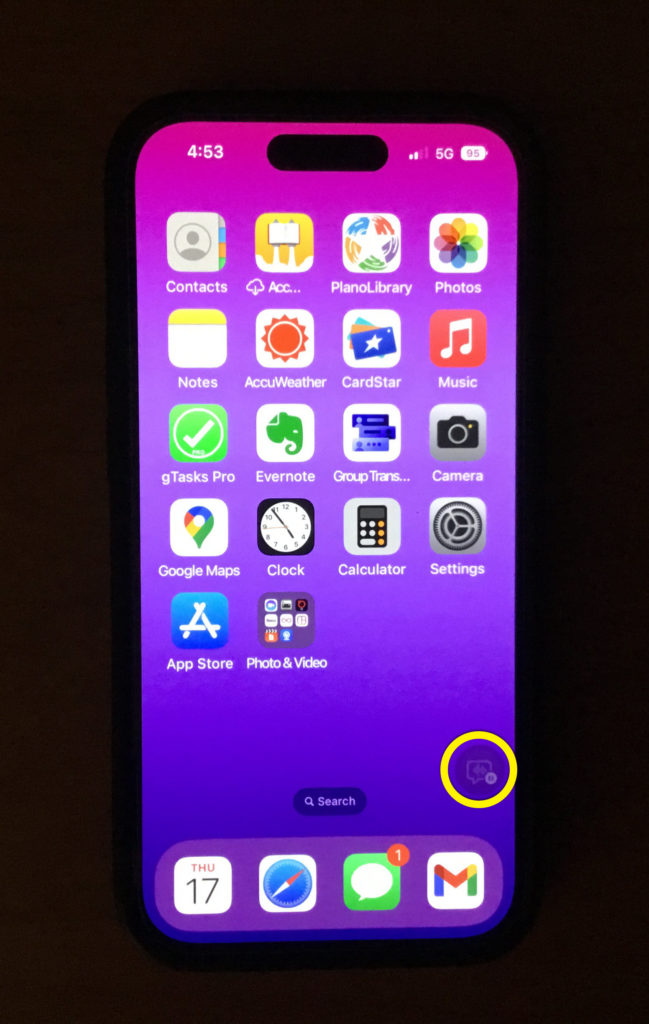

Playing with the Live Caption Button

As soon as the light purple iPhone 14 Pro arrived, the first thing I did was turn on Live Captions (beta). Yes, it shows “beta” in the name. Apple took a progress-over-perfection approach with Live Captions.

Apple could have waited until the captions worked well before releasing the feature. Instead, it released the feature sooner and labeled it beta. Letting people know it’s in beta makes them more forgiving of its bugs.

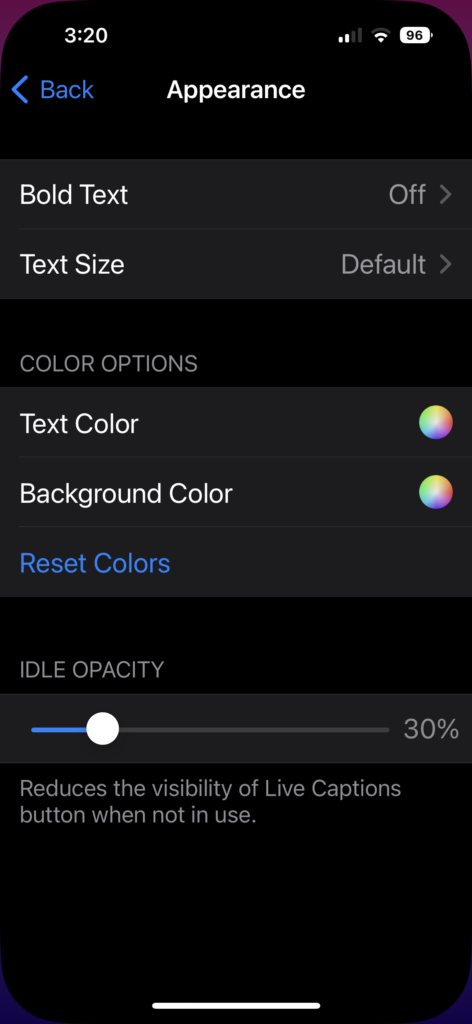

When you turn on Live Captions, it sits on your screen as a button. You can move the button around the screen. You can also control the transparency of the button. The following image shows a screenshot of the Live Captions settings with the button’s opacity set to 30%.

The following image is the same screenshot except the button’s opacity is set to 100%.

After I had played with the shiny new phone for a while, the button started bugging me. No matter where I put it, the button got in the way of the content behind it. Making the button more transparent didn’t help; it remained distracting.

Then a proverbial light bulb went off above my head: turn Live Captions into a shortcut.

Creating the Accessibility Shortcut

Even on the ancient iPhone 7 Plus, I used the Accessibility Shortcut for easy access to the Color Filters and Magnifier. I’d press the Home button three times for the Accessibility options to pop up and then select the one I wanted.

This also works on the iPhone 14. Instead of the Home button below the screen, you press the side button on the right. Although I don’t have hand dexterity issues, pressing that button three times felt like a strain. It takes a little more force than the old Home button did.

Fortunately, there is a better way that requires less effort. Two ways, actually. One of them isn’t available on all phones. For example, tapping the back of my vintage iPhone 7 Plus did nothing because it lacks that capability. The iPhone 14 has it.

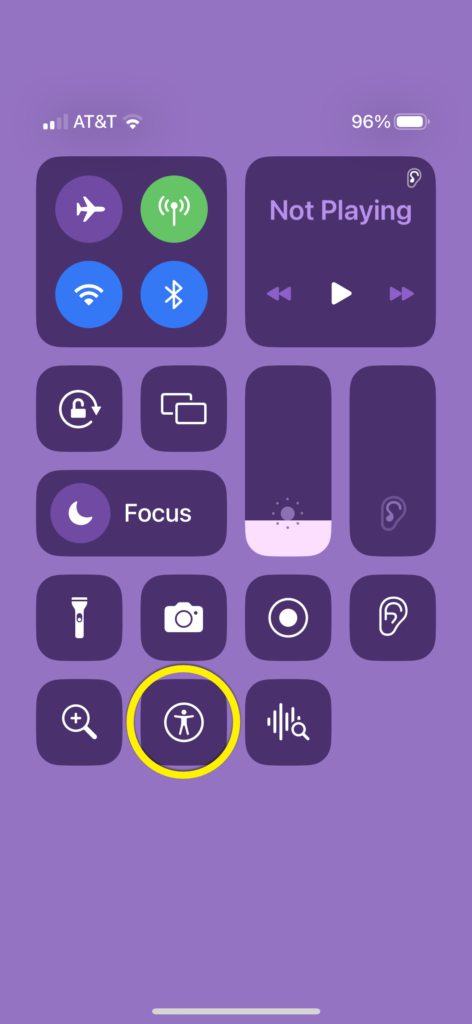

Here are the two ways to open the Accessibility Shortcut options: pull down Control Center, or tap the back of the phone two or three times (depending on your setting).

First, add Accessibility Shortcuts to the Control Center. Select the following:

- Settings

- Control Center

- The plus sign (+) next to Accessibility Shortcuts

Next, add Live Captions to the Accessibility Shortcut by selecting the following:

- Settings

- Accessibility

- Accessibility Shortcut

- Live Captions

- Back

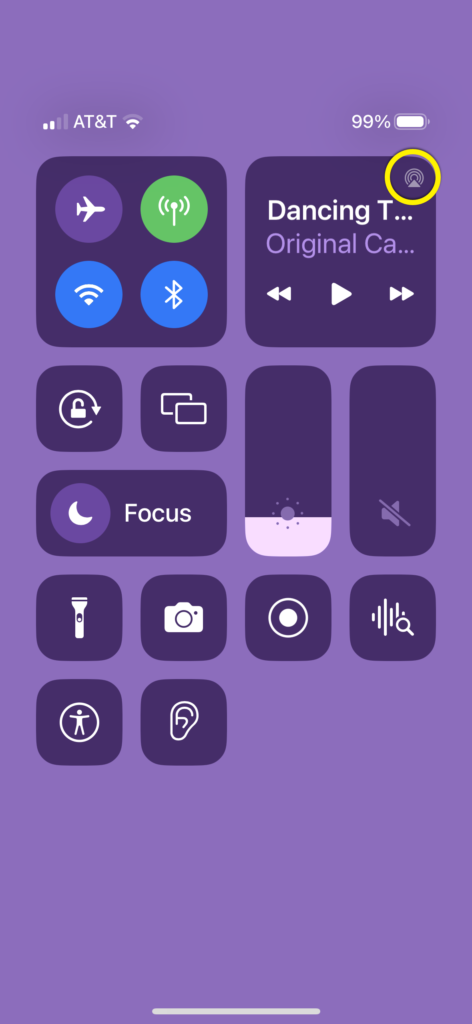

And you’re set. Now Live Captions is available as a shortcut whenever you open Control Center, as the following image shows. Swipe down from the upper-right corner of the screen, then select Accessibility Shortcuts and Live Captions.

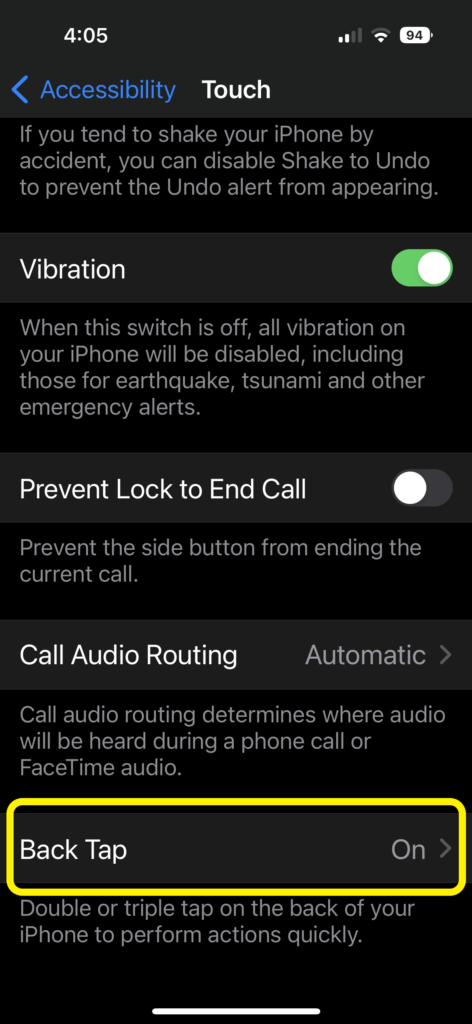

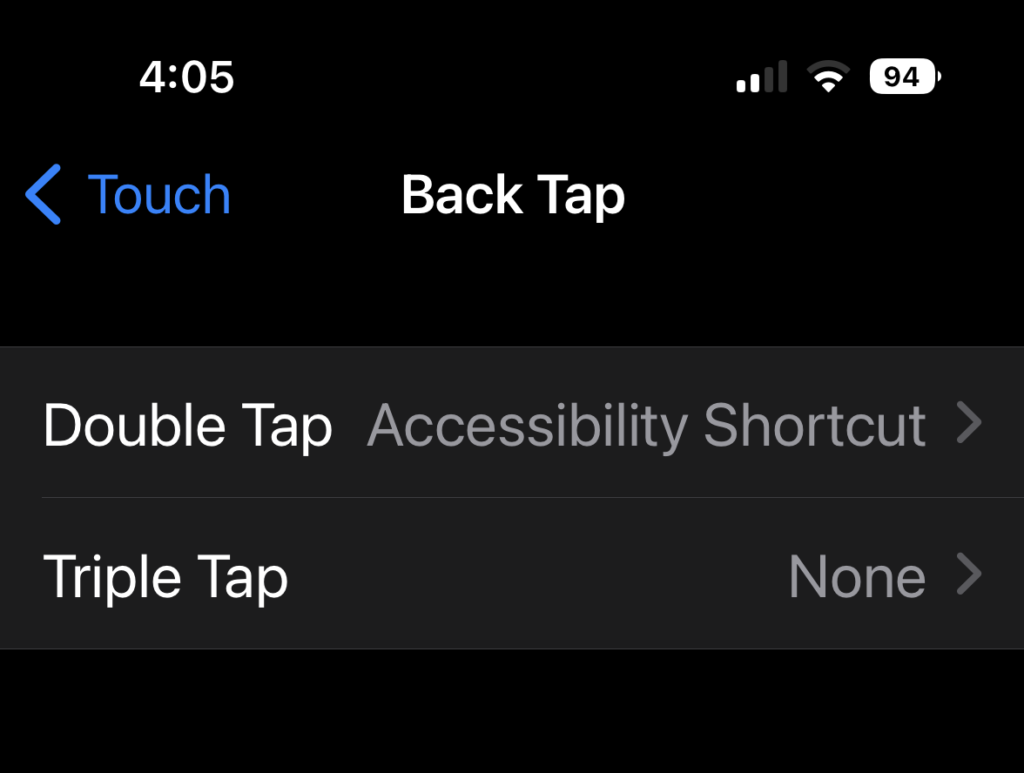

You can also open the Accessibility Shortcut with Back Tap if your iPhone model supports it. Select the following to use Back Tap for the Accessibility Shortcut:

- Settings

- Accessibility

- Touch

- Back Tap

- Double Tap or Triple Tap

- Accessibility Shortcut

Here’s the option to assign the Accessibility Shortcut to Back Tap.

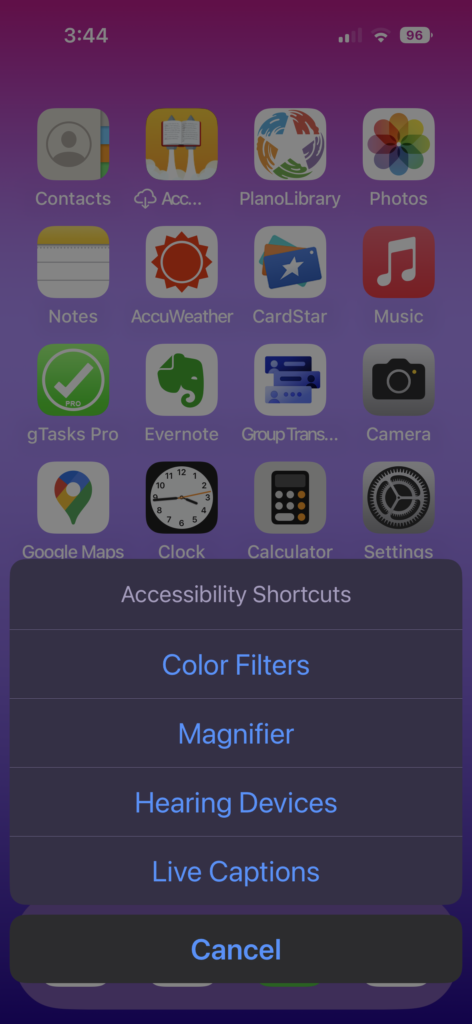

Tap the back of the phone two or three times, depending on whether you chose Double Tap or Triple Tap. The menu pops up from the bottom with Live Captions listed.

Taking the Live Captions (Beta) for a Test Ride

You can move the Live Captions button wherever you want. To use it, select the button. If you want to caption something playing on your phone, deselect the Microphone icon. If you want to caption the world around you, ensure the Microphone icon is selected.

The pause button starts and stops the captions. Tap the button with two arrows pointing in opposite directions to expand the captions to full screen.

Here are the Appearance settings you can customize for Live Captions:

- Bold (off / on)

- Text size

- Text color

- Background color

- Idle opacity % (controls visibility of the Live Captions button when not in use)

The Live Captions settings are confusing. First, if you want them to work in all apps and for speech around you, make sure in-app Live Captions are turned off for FaceTime and RTT. Even so, Live Captions wouldn’t caption any of the videos playing on my phone.

After some troubleshooting, I figured out why. My iPhone connects to my cochlear implant, so the phone’s audio goes to the implant and Live Captions wasn’t getting any sound to caption. I couldn’t find a way to have Live Captions listen to the iPhone’s speaker instead of my bionic ear.

I switched to my backup cochlear implant, which isn’t paired with the phone. That worked.

There are two ways to fix it without switching implants. The first is to switch the playback sound from the implant to the iPhone’s speaker. This isn’t intuitive: you have to open the player options to change the playback destination. For me, an Ear icon showed up instead of the usual triangle with three partial circles above it, as shown in the following image.

The second way is to turn off Bluetooth. This disconnects the sound from my cochlear implant.

By the way, you can’t record or take screenshots of the captions or the button. They’re like vampires: they don’t show up in videos or pictures! This is most likely due to privacy concerns for people on the other end of the conversation, or copyright concerns for other audio. So I had to record the following video with another device.

The following video shows how to use the Accessibility Shortcuts and Live Captions.

Video description: Hand interacting with iPhone. Pulls down the menu to select Live Captions from Accessibility Shortcuts. Moving Live Captions window and unpausing microphone. Expanding Live Captions window to full screen. Moving the Live Captions window up and down. Changing the window back to a button and closing the button with the Accessibility Shortcut.

I tested Live Captions on my TEDx Talk in the YouTube app. Automatic captions, aka autocraptions, still don’t like my accent, and the captions were bad. YouTube’s original automatic captions were noticeably more accurate than Live Captions. The talk now has human captions, as the next video shows. (The next video contains open captions.)

Video description: iPhone playing Meryl’s TEDx Talk with Live Captions and YouTube captions showing. YouTube captions are accurate whereas Live Captions are not.

The jury is still out on how well Live Captions handles speakers other than me. Apple makes it clear that it’s in beta. I appreciate that Apple released it in beta; I’d rather get it sooner with bugs than wait a year for it to be perfect.

FaceTime Captions

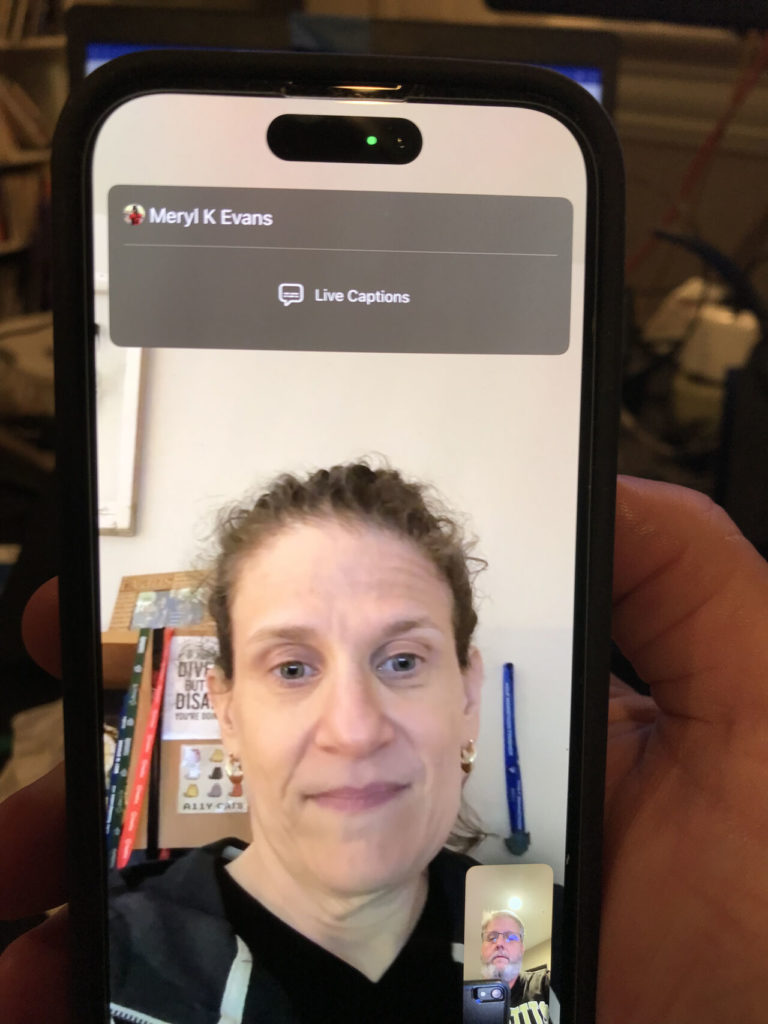

You can use Live Captions with FaceTime. However, FaceTime comes with its own built-in captions. If you turn on Live Captions only for FaceTime, the captions appear at the top of the screen, and you don’t need the Live Captions button.

I hadn’t made a FaceTime call in more than a year. With Live Captions in iOS 16 and my upgrade from an iPhone 7 Plus to an iPhone 14 Pro, I gave FaceTime another try. My mom had fantastic timing: she asked if we could FaceTime because she had a lot of updates, which gave me the opportunity to test the captions.

It turned out my phone had two different sets of captions turned on. One was FaceTime’s in-app captions, which show at the top. The other was the iOS Live Captions. FaceTime’s in-app captions worked a lot better than the iOS Live Captions. I don’t know why the quality differs between the two.

During the conversation with my mom, the FaceTime in-app captions stopped working within five minutes. Fortunately, my mom is one of the easiest people for me to lipread. I rebooted my phone and tested the FaceTime in-app captions again. They worked, but we talked for under five minutes. Another time I tried them, the FaceTime captions didn’t show up at all.

I wanted to get a photo of the captions for this post, so I used FaceTime to call my spouse. This time, FaceTime’s in-app top captions didn’t work on either end of the conversation. The following image shows the captions box on top with no captions.

Since Live Captions is in beta, it’s going to be buggy. Still, it’s perplexing that the top captions didn’t work at all. I can understand them stopping in the middle of a conversation; many other live caption apps have done that.

I’ve submitted a request asking Apple to let us move FaceTime’s in-app captions. You can move the iOS captions, but not the FaceTime in-app captions. When the captions are at the top, I can’t read lips and captions at the same time: either I miss the lips while reading the captions, or I miss the captions while reading lips. For now, I won’t be using FaceTime to call anyone other than my mom.

It’s about time Apple made Live Captions available. As expected, they’re very buggy. That’s one reason a shortcut helps: I don’t use them enough to want the button on screen at all times. I want to give a shout-out to Apple for releasing the beta of Live Captions rather than waiting until it’s perfect. That’s progress over perfection.

You might also be interested in these posts.

- Captions: Humans vs Artificial Intelligence: Who Wins?

- Augmented Reality Captioned Glasses

- Why and How to Caption Videos Folks Love

Does Your Website or Technology Need to Be Audited for Accessibility or Get a VPAT?

Equal Entry has a rigorous process for identifying the most important issues your company needs to address. The process will help you address those quickly. We also help companies create their VPAT so they can sell to the government or provide it to potential clients who require it. Contact us about auditing and creating VPAT conformance reports.

Well, I too have a cochlear implant, with a new Bluetooth-enabled sound processor, and I just upgraded to iOS 16 and discovered Live Captions. But I gotta tell you, your comments about CI were VERY confusing. And on top of that, the article would NOT print and I could NOT copy it! I hope it hasn’t ruined my experience with Live Captions! For the first time, I can HEAR on my iPhone and now SEE captions! I’m overjoyed. And I’m very disappointed in your comments, because they made no sense to me. Especially since my OLD “bionic ear” was not Bluetooth enabled, and I should be able to connect with you, BUT!

Hi Virginia. Thanks for taking the time to comment and share your thoughts. First, Live Captions have improved a bit since I wrote this post 10 months ago. Technology moves fast. My colleague reread the post and didn’t find the part about the older CI confusing. However, I tweaked it and hope it’s clearer. It was a second cochlear implant, my backup. It was not paired with the phone. My main one is paired with the phone. The captions work great with FaceTime and videos playing on your phone. But they still don’t work for live in-person conversations. As for printing and copying the content, that’s a bug. Please email contact@equalentry.com to report it, as it means more coming from readers than from me.