Summary

Automatic captions are often the subject of jokes. They’re also the cause of viewers’ ire. Mirabai Knight wants to change that by educating people on the value of human captions. A professional steno captioner since 2007, she can type up to a jaw-dropping 260 words per minute (WPM)!

Mirabai founded the Open Steno Project. Its purpose is to free machine stenography from the expensive, proprietary locks of the professional court reporting industry. The project allows anyone to learn stenography for free. It introduces Plover, a free open-source stenography program that turns your keyboard into a steno machine.

Hobbyist stenographers have produced affordable steno machines that work with Plover. You can even build your own machine. The Open Steno Project website has resources to help anyone learn stenography, as there’s a need for more human captioners. They won’t be replaced by automatic captioning anytime soon.

The Challenges of Automatic Captions

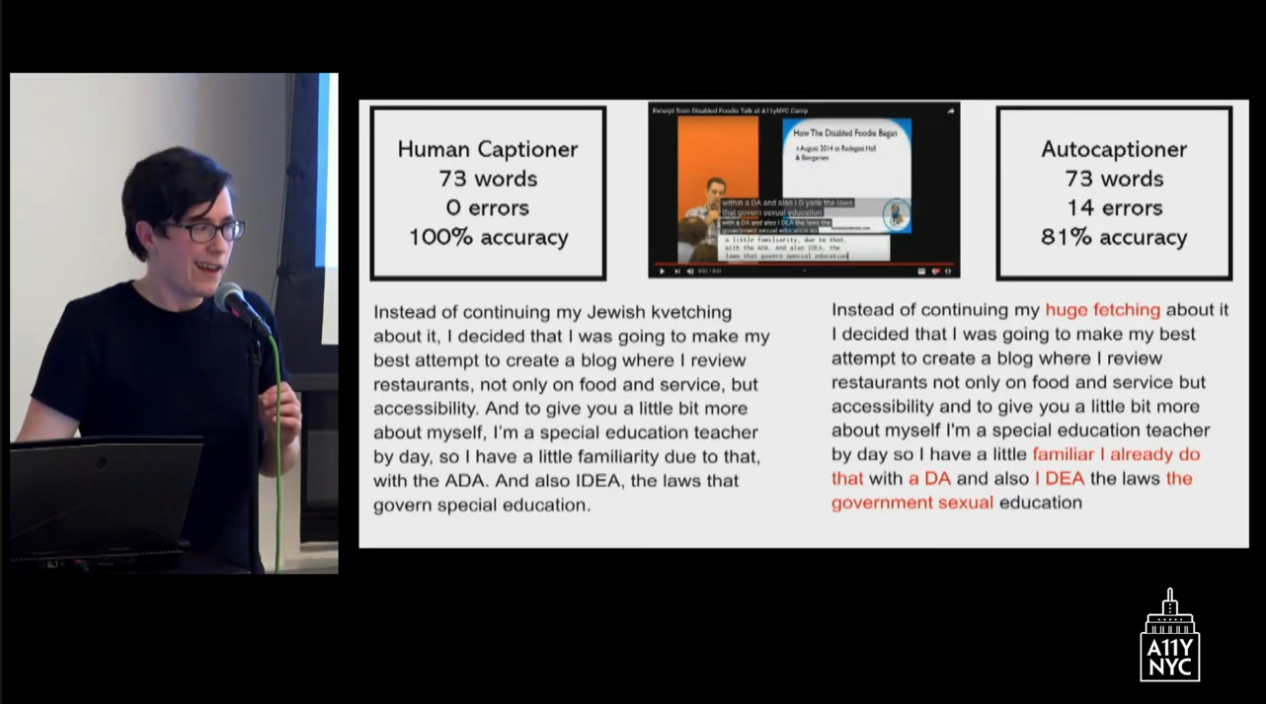

There’s a lot of confusion about what counts as an acceptable captioning accuracy rate. Automatic captions like those on YouTube have a reported accuracy rate of 70 to 80%. Does this sound like a good rate? It means roughly two to three mistakes per sentence. The accuracy rate has improved, but it depends on many factors.

Mirabai then shares something she calls the 90% = 100% fallacy. When someone says that captions are 90% accurate, people tend to round up to 100% and call it perfect. But the average sentence contains 10 words, so a 90% accuracy rate translates into one wrong word per sentence. That could easily be 10 wrong words per paragraph, a rate that would render captions useless. Hence, 90% is unacceptable and cannot be rounded up to 100%.
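The arithmetic behind these figures can be sketched in a few lines of Python. This is only an illustration, not something from the talk: the function name `expected_wrong_words` is made up for this example, and it assumes the 10-word average sentence mentioned above and that errors are spread evenly across words.

```python
def expected_wrong_words(accuracy_percent: int, word_count: int) -> float:
    """Expected number of wrong words in a passage at a given accuracy rate."""
    # Assumption: errors are spread evenly, so the error share is (100 - accuracy)%.
    return word_count * (100 - accuracy_percent) / 100


WORDS_PER_SENTENCE = 10  # average sentence length used in the text

for accuracy in (70, 80, 90):
    wrong = expected_wrong_words(accuracy, WORDS_PER_SENTENCE)
    print(f"{accuracy}% accurate: about {wrong:g} wrong word(s) per sentence")
```

Under these assumptions, 90% accuracy works out to one wrong word per sentence, and 70% to three.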

A 99% accuracy rate means one wrong word per paragraph. Mirabai strives for 99.99%. Anything less is a bad day. An accuracy rate of 99.99% means one wrong word or omission per four pages.

There’s a thing called the Star Trek effect. This refers to people talking to computers in a conversational manner. Somehow, computers always understand humans in science fiction. Well, except for one scene when Star Trek’s Chekov, who has a Russian accent, struggles to get the computer to understand him.

Nonetheless, real life is not like Star Trek or any fiction. A slight margin of error can create an intensely frustrating experience that can cause people to quit trying.

Advantage: Humans

After a captioned event, people came up to Mirabai to tell her they were surprised she was doing the captioning. They thought it was artificial intelligence because she was fixing errors as she went.

It turns out stenography is six times more efficient, more ergonomic, and faster than QWERTY (standard keyboard) typing. Invented in 1911, it’s the fastest method of text entry. Mirabai can type at least 200 WPM with 99.99% accuracy, and do it for eight hours a day without any discomfort.

She explains it’s not the fingers working hard while captioning. It’s the brain. Captioners must hear things correctly, parse them, comprehend them, and filter them through their steno code to get the correct output.

Well-qualified, well-trained stenos understand what people are saying. Computers don’t. Artificial intelligence works by matching against vast, very complicated databases of probabilistic and fuzzy, logically interconnected words and phrases, sound bites, and Markov chains. But it doesn’t understand what people are saying, in the sense that it can’t guess what someone is going to say next.

A computer can’t do a sniff test, running every single word it outputs through a filter of “Does this make sense?” Humans can. Stenography is 100% deterministic. It’s a matter of the stenographer understanding what the speaker is saying and then deciding what that’s supposed to look like in the text. That’s something computers can’t do.

Punctuation matters! It speeds reading comprehension. AI often fails on punctuation. YouTube’s automatic captions are notorious for the lack of punctuation.

Humans also have the ability to suddenly understand what someone is saying in a subject matter they don’t know a lot about. Humans can comprehend more because we understand the story the speaker is telling. Computers don’t have this ability.

Have you ever searched for an unusual two- or three-word phrase from a document you’re reading? Search for that phrase and the document will most likely be the only hit.

The Complexity of Captioning

The recombination of individual words in a language can be so multifarious and so unique to each circumstance that it’s very difficult to map probabilistically. It’s also very difficult for an algorithm to get a better sense of what it’s captioning over a single half-hour session. A human can, and that’s important.

Ensure that any captioner you hire is trained as an actual real-time captioner, not as a court reporter. Well-qualified captioners with good general knowledge of a variety of subjects will produce high-quality captions in a variety of circumstances.

An algorithm’s accuracy varies from job to job. When the audio quality drops a little and the amount of unfamiliar jargon increases, the right word shows up fifth or sixth in the artificial intelligence’s list of possible matches rather than first or second. Introduce an accent, and accuracy dips further in an unpredictable way.

Humans misspeak and don’t always articulate. Factor in background noise or music and it gets harder for AI to be accurate. Furthermore, people pronounce words in different ways based on where they’re from. English is filled with homophones, words that sound alike but are spelled differently. It’s hard to nail down all of these possibilities into a simple binary output. Humans are good at this.

Automatic Captions or Human Captions

There’s a tremendous amount of cognitive fatigue in trying to take imperfect audio, synthesize it with your human semantic understanding of what the speaker is saying, and come out with clear and complete ideas from that lossy audio stream.

Ask these questions to determine whether to use AI to caption an event:

- Do all speakers have standard American accents? Many AIs are trained on baritone male voices and have problems with female speakers and non-American accents.

- Do you have a professional audio visual (AV) team monitoring audio? Will you have crystal clear audio with no background noise as well as top-notch internet connectivity?

If you answer no to either of these, automatic captions won’t work well.

Also, people need to consider the stakes of captioning failure.

- What if an important word is wrong?

- What if the overall accuracy tanks?

- Is lag or connectivity a factor?

How important is it that the people in your audience, the person you’re captioning for in a job interview, or the student sitting in a college lecture get a full and accurate text? Suppose they miss one word because the algorithm is 99% correct. That one wrong word could be the most important word in the paragraph. Does that mean the student fails the test or someone doesn’t get the job? Sometimes yes. Sometimes no. This is a question you must answer.

Let’s say you have been getting great speakers and high-quality automatic captions. Then suddenly someone comes in with a slightly unorthodox accent and accuracy falls from 99% to 80%. What do you do?

Can you just toss their whole speech out and say that you got 8 out of 9 talks at the event? That one talk was incomprehensible and confusing, so does it matter? Is lag a factor? Is the person using the captions using them for interaction? Are they able to answer back to the speaker? Are they expected to answer questions? Or complete a pop quiz?

It’s important to consider that real-time interactivity is an area where a human captioner sometimes has a real advantage over auto-captioning.

The Future of Captioning

What does the future look like for captioning? Demand for captioning is skyrocketing as social media and other platforms have added captioning support. Many people who use captions are not deaf or hard of hearing. Captions offer multi-modal input for people who want to read along while listening to TV. It’s more comprehensible and less fatiguing.

Baby boomers are in their twilight years. Many went to rock concerts and are staying in the workforce longer. Their hearing has most likely changed. They’re embracing captioning.

The cost of captioning

But, and this is a big one, what about the cost of captioning? There aren’t enough steno captioners to provide good-quality captions for everyone who needs them. There are only 400 certified real-time captioners in the U.S. Even if Mirabai could caption 24 hours a day, she would not be able to make a dent in the number of people who want or need captioning.

She admits she’s more expensive than an algorithm. Like everyone else, she has to feed her family. She tackles the elephant in the room. There’s just not always money to pay a human captioner. She expects to see a trend of companies and events saying you can have the captions but only on your smartphone, not on the big screen. Or that it’s available on request, not proactively.

Open captions are going to be important for providing widespread access to as many people as possible. One in 15 people is deaf or hard of hearing. If you narrow that down to age 65 and older, then it’s 1 in 7.

What company wants automatic captions spouting “sexual education” instead of “special education” and making it the laughingstock of the entire internet? The company might try to shut it down, but it will find a way to show up in public.

Human captioning could become a premium service. For example, someone is using auto-captioning and the accuracy starts tanking. The viewer could hit a switch to move over to human captioning. Then the haves get good captioning and the have-nots don’t. It’s an economic disparity.

Another potential trend is companies saying you don’t need live captioning. Just record the live audio and then send out 30-second snippets to 4 million people on Mechanical Turk (a crowdsourcing marketplace), and they’ll correct the auto transcript or transcribe it from scratch. Then, you stitch all those pieces together and voila! You have a beautiful low-cost transcript. This isn’t real-time communication access, and it comes with quality-control problems.

Some companies jump into automatic captioning and when it fails, they find out what it’s like to work without a net and go back to human captions.

Combining human and automatic captions

It’s possible that human captioning and auto-captioning will work together to some degree. In one scenario, the human captioner watches the auto-captions and jumps in to save the day when their accuracy starts to fall. There may be a way to pair the two to benefit people who depend on captions and would not have full coverage if they relied only on human captioners for everything.

Repairing something that breaks is trickier than getting it right the first time. One prospect is a workflow where the human captioner’s transcript is almost perfect save for a few words. The algorithm can scan through it and find the missing words. The human captioner can then quickly jump in, fill in the words, and have a 100% accurate transcript within five minutes of the event.

The human captioner followed by an algorithm check is a more efficient process than having the auto-captioning make a lot of mistakes and then adding on a group of low-paid humans to fix those errors.

For events where speakers may switch between languages, there is software that allows multilingual captioners to quickly switch languages as needed. This requires finding a human captioner who is fluent in the languages and also has the stenographic capability.

Depending on captions vs. captions by choice

Some deaf people are concerned that if automatic captions become the standard, they won’t be able to convince their employers to pay for human captioning when it comes to important meetings or classes. They worry that people will decide auto-captions are good enough.

People who don’t depend on captions will see that artificial intelligence gets a lot of things right. And when it gets things wrong, it won’t matter to them, since they can hear and fill in the gap. But those who depend on captions won’t be able to figure it out.

Human captioners, for the time being, can do things that auto-captions can’t do. Humans will always have a place in the captioning industry.