by Sam Berman

For me, as for many people, my first direct, real-world interaction with artificial intelligence was with Siri on my iPhone. It was the first implementation of artificial intelligence that gained any serious, mainstream traction. And yet, at that time, Siri seemed like a novelty, a gimmick Apple pulled from science fiction movies.

The kinds of questions I asked it then were sarcastic, along the lines of “Do you love me?” or “Why did the chicken cross the road?” I didn’t understand it and didn’t see its potential. It wasn’t until Apple added the ability to find restaurant and movie listings, about a year after Siri’s initial launch on the iPhone 4s, that I began to see what it could do.

At the time, smartphone ownership was still new to me. My iPhone made me a more confident solo explorer and traveler. I could find any information I wanted, at any time I wanted, as long as there was a cell signal. Even so, as a one-handed typist, typing on the phone was a challenging, tedious task.

I envied those who could type on their phones without looking, since I had to focus all my attention on the screen of my device just to ensure my thumb tapped the correct key. Siri wasn’t there yet, but I had a feeling that once the platform matured, it could break down the remaining barrier between me and my device.

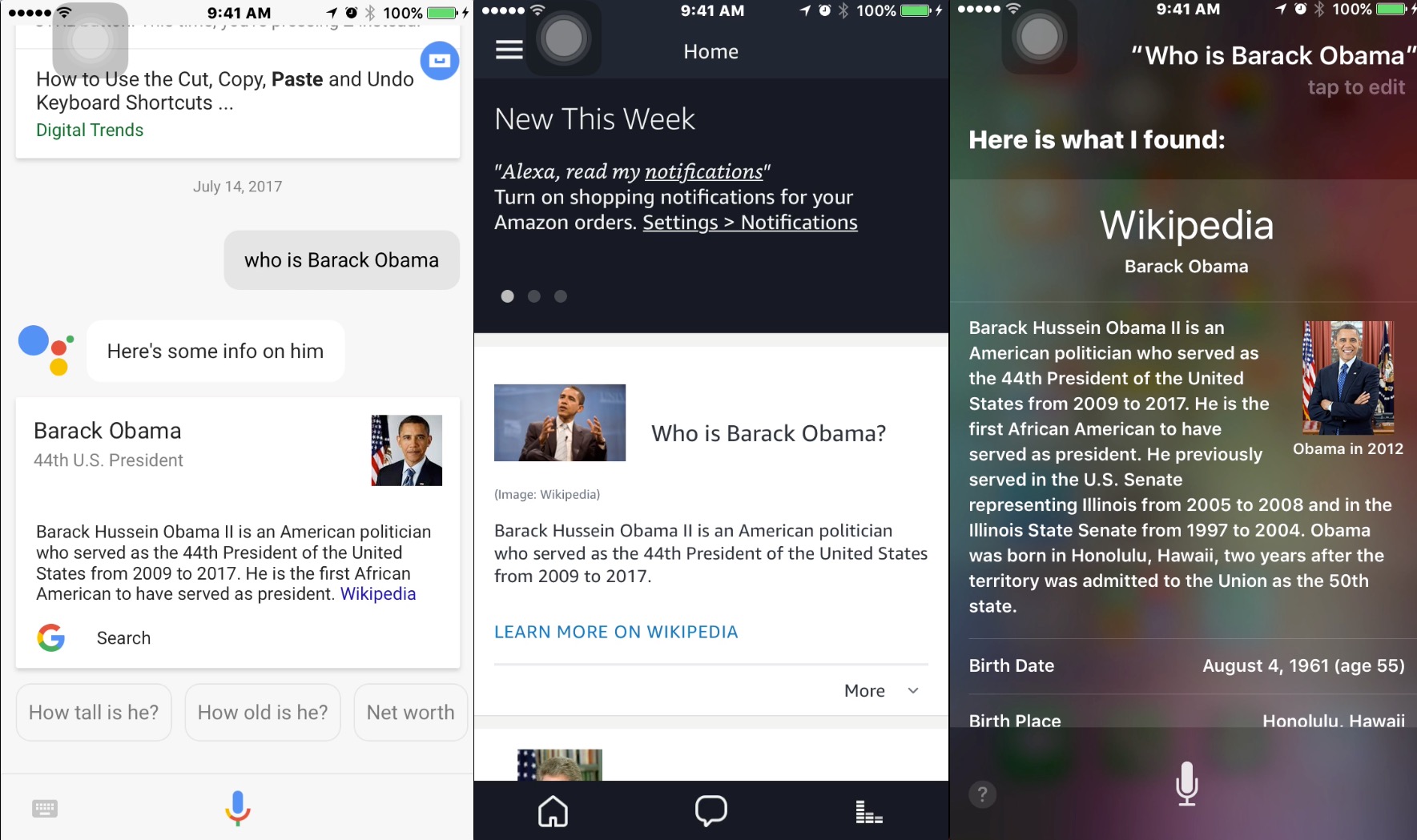

More recently, I have had the opportunity to explore a few of the now omnipresent virtual assistants built by the bigger tech players in the field. Amazon, Apple, Google, and Microsoft all have their own in-house virtual assistants. Of the aforementioned companies, I have engaged with Amazon’s Alexa, Google’s Assistant, and of course Apple’s Siri.

Each has its strengths and weaknesses, but they are all extremely valuable and functional tools that offer a great opportunity for those of us with disabilities to accomplish daily goals and overcome daily challenges.

Alexa specifically has become an invaluable part of my ecosystem. I use it on a daily basis to command my music library, check the weather and news headlines, and support me when I cook. In the kitchen, I have personally only used Alexa to set cooking timers, but it can also be used to check measurement conversions, add items to your shopping list, and, with the right skills enabled, get food and cocktail recipes and control appliances such as your coffeepot and oven.

It can do all this without my having to physically touch the hardware that hosts the platform. This is just one of the reasons artificial intelligence (AI) will continue to play an increasingly prominent role in everyday life, especially for those of us living with disabilities. Since Google and Amazon’s offerings are open to third-party developers, there are already dev shops seeking to leverage these programs specifically for this purpose.

One feature recently unveiled by Google, dubbed “proactive notifications,” would be an essential tool for people with memory loss, for example. This feature would allow Google Home (which provides the Google Assistant a standalone platform) to wake itself up without user engagement and provide information such as traffic updates and changes in weather.

Such a feature exemplifies the extent to which technology can augment our lives for the better. While a person with a disability may or may not care about getting traffic updates before they are planning to travel, they may care more about tracking their diet, exercise, and medicine intake. Such proactive notifications could be extremely useful for anyone who has trouble “remembering to remember.”

Google Home seems to offer a particularly enticing platform for home aid use. Google has the benefit of already being nearly everywhere. Since the company started as a browser-based search engine rather than a hardware manufacturer, many of its services are thankfully still operating system-agnostic.

The fact that they still regularly release products and services on macOS, Windows, and iOS, in addition to their own mobile operating system, Android, shows how open they continually strive to be. The vast number of users Google has amassed globally means not only that there will be ongoing support for the platform but also that the troves of behavioral user data the company has collected are actively being leveraged to make the Assistant smarter.

The Google Assistant is the most fun AI to talk to of the three I’m discussing in this article. I say that not only because it has the most expressive voice, but because it also has a few “party tricks” or “Easter eggs” up its sleeve. For example, if you ask it to sing a song, it will start beatboxing.

Likewise, if you have both a Google Home and an Echo device within earshot of each other and ask “Hey Google, can you say Alexa,” the Assistant and Alexa will talk to each other. Based on my personal experience, Google’s offering has two main drawbacks. The first is that you have to interact with the Assistant using full sentences.

This could make it difficult for a person with certain cognitive impairments to interact with it if they have difficulty conceptualizing full sentences in their mind before speaking out loud. The second drawback is a decreased sense of privacy.

Siri is a pretty good assistant. It can perform most of the basic functions I expect from a modern, AI-equipped virtual assistant, but in order to get the most out of it, you need to have a suite of Apple’s hardware. While you can access Alexa and Google Assistant on both iOS and Android, and Cortana on Windows and iOS, you must have an Apple device in order to engage with Siri.

I see this as Apple’s downfall in terms of the broader adoption of Siri as a full-fledged smart home assistant. Apple’s hardware is both too expensive and too feature-restricted. If they want to continue penetrating culture and society deeper than they already have, they have to stop being so beholden to their aesthetics and wallet and give us users the features we want.

As I mentioned above, Amazon, like Google, benefits from being universal. Although Amazon has numerous in-house brands, products, and services, the company seems aware of how to navigate the waters of brand competition, perhaps because of its origins as an online retailer.

They are much more interested in getting their software into as many homes as possible than in getting it there on their own brand of hardware. Alexa was also the first mainstream AI-based virtual assistant opened up to outside developers, meaning its capabilities are built by a community of actual users rather than just Amazon’s own developers. This is a massive benefit that makes Alexa versatile and flexible. This also means that the community will likely continue to support a given piece of hardware long after Amazon abandons it.

While developers can implement Alexa on their own hardware, the company does offer a growing range of hardware platforms for their virtual assistant to live on. Some of these are offered at extremely competitive prices that undercut the competition. On this note, I should mention that while Google Assistant is my favorite assistant of the three I’ve mentioned, Amazon’s Echo Dot, costing a mere $50, is my favorite platform at the moment. This is because it is far more versatile than what the others offer right now.

It is small. If you’re a smart home beginner, it is very inexpensive. It offers a feature very important to me, that no other platform offers right now: due to the presence of an auxiliary port on the device, it can turn a wired speaker system into a wireless speaker system. This is handy if you want to have your speakers at a bit of a distance from the source of the audio or connect multiple audio sources that can be switched between.

This means that when I’m working at my computer, I don’t have to stop what I’m doing to control the music playing in the background. If I’m home but not near my computer, I can either ask Alexa to play a different artist, album, track, or playlist by name, or pull out my phone, launch Spotify, and play something from my collection that will then play over the speakers that I have plugged into the Dot.

Building a home aid AI assistant atop a mainstream platform has some broad benefits. A large user base means the platform is more likely to be supported for a longer period of time than if it were a completely new, proprietary platform that didn’t end up getting adopted by enough people to make it financially viable. Additionally, the more people who use the platform, the more readily it can be iterated, updated, and improved.

Another benefit of using Alexa or Google Assistant is that they can already interact with a number of popular third-party services, such as Google Calendar or ridesharing service Lyft. This means there is potential for any home aid software built on one of these assistants to interact with these other third-party services.

More recently, Amazon and Google have both launched the ability to make audio calls through their respective virtual assistants. Lastly, all of the aforementioned virtual assistants have context awareness. This means you can ask one of them a question like, “When was Barack Obama president?” and once it responds, you can follow up with a question such as, “When is his birthday?”

They understand whom “his” refers to based on your previous question, which dramatically increases usability by making the verbal interaction more natural and human-like. Additionally, all the assistants I have mentioned can answer search queries such as “How many calories in a stalk of celery” or “What are the directions from home to JFK International Airport” in some capacity, although some provide more meaningful responses than others.

General drawbacks of building a home aid assistant on these virtual assistants include the heavy reliance on natural language voice user interfaces. While interacting with these assistants using voice is a benefit for many of us, there is a large portion of people in the disabilities community for whom this will be a dealbreaker.

As I mentioned earlier, some people with cognitive disabilities may have a difficult time forming a full sentence in their head before communicating verbally. This is important to note because while you can make a request to Siri or Alexa by saying, for example, “Timer, 15 minutes,” with the Google Assistant you must utter the full sentence, “Hey Google, set a timer for 15 minutes.”

Another drawback of virtual assistants in general is that in order to reap the most benefits out of any of them, you have to commit to a single manufacturer’s ecosystem. For example, in order to use Siri to stream a video you selected on your phone to your TV, you need to have an Apple TV.

Similarly, in order to use Google Assistant to do the same thing, you need a Chromecast device or a Chromecast-enabled TV. This is a barrier specifically for fans of Siri because all of Apple’s hardware is so expensive.

In the past two years or so, we as a society have reached a tipping point at which virtual assistants are not only commonly accepted but embraced. An ever-increasing number of people are viewing digital technology not as a luxury item but as a tool that can increase productivity or liberate some untapped potential.

This is an essential part of the maturation process in the vast field of emerging technology. In order for technologies such as virtual assistants to improve and grow, they have to be adapted, changed, and allowed to do things they weren’t necessarily designed or intended for to begin with.

Sam Berman has two years of experience as an educator and advocate for people with disabilities, and teaching computer literacy in the aging community. In the spring of 2016, he attended a web development boot camp at the New York Code & Design Academy with the hopes of combining his passions for technology and advocacy into a career. As a consultant for Equal Entry, he taps into these experiences to make the web easier to use for people with disabilities.
